Transcripted Summary

What is continuous testing? I searched for this term on the internet, and as is so often the case when it comes to these kinds of terms, well, I came across multiple sites providing multiple definitions.

So, let's have a look at the Wikipedia definition. This one says:

> Continuous testing is the process of executing automated tests as part of the software delivery pipeline to obtain immediate feedback on the business risks associated with a software release candidate.

Hmm. Well, I looked further.

Bas Dijkstra, a test automation professional, elaborates a bit more on this topic. He says:

> Continuous testing is the process that allows you to get valuable insights into the business risks associated with delivering application increments following a CI/CD approach. No matter if you're building and deploying once a month or once a minute, CT allows you to formulate an answer to the question, 'Are we happy with the level of value that this increment provides to our business, stakeholders, end users?' for every increment that's being pushed and deployed in a CI/CD approach.

Okay, that's quite informative already.

Jez Humble puts it as follows:

> The key to building quality into our software is making sure we can get fast feedback on the impact of changes. [...] In order to build quality in to software, we need to adopt a different approach. Our goal is to run many different types of tests, both manual and automated, continually throughout the delivery process.

In the book Continuous Delivery, which he wrote together with Dave Farley, they sum it up as follows:

> Testing is a cross functional activity that involves the whole team, and should be done continuously from the beginning of the project.

Well, that's quite enlightening.

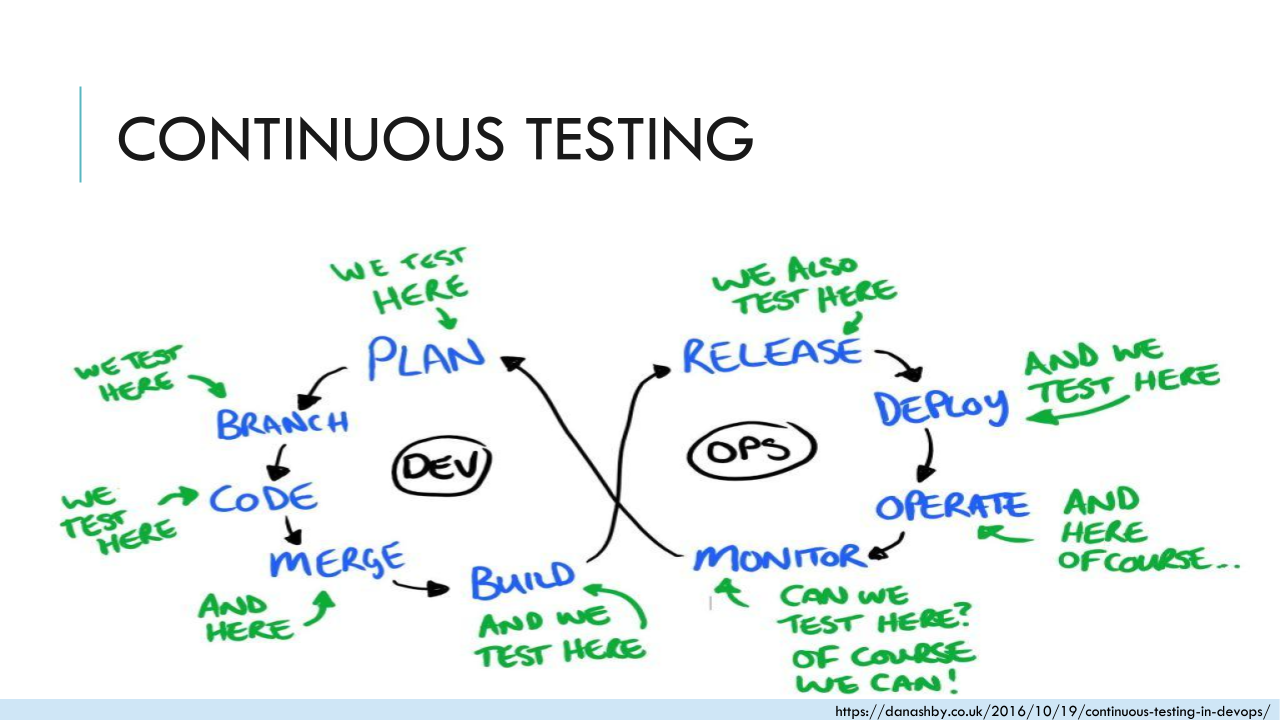

Dan Ashby, another well-known person in the testing world, nails the core of continuous testing in his model of where testing fits in a DevOps world: basically everywhere.

For me, testing fits at each and every single point in this DevOps model.

I'd like to stick with this image for this course.

# Why should we care?

The presented definitions already tell us a lot here. There are many benefits resulting from testing all the time, and some of them are the following:

First of all, you get fast feedback at any stage. For that, the automated tests need to be included in the build pipeline, and the build needs to fail as soon as problems are detected.

Another thing is that you can continuously learn about your product, and the quality expected.

Consequently, it also speeds up development by reducing blocked time, and it limits work in progress and the context that is needed. This way you can benefit from potentially earlier releases, and therefore a faster time to market.

Continuous testing also increases confidence that we're going in the right direction. Therefore, we reduce waste by learning early.

Not to forget, all these benefits increase team morale.

For all of this, close, and especially constructive, collaboration is needed.

In product development, we have a lot of feedback loops. Whenever you can, shorten them. Only with feedback can we learn, and the earlier we learn, the earlier we can adapt. It's all about failing fast so we can learn fast, and this applies to the whole workflow.
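To make the idea of a build that fails as soon as problems are detected a bit more concrete, here is a minimal sketch in Python. It is not how any particular CI tool works; the `run_pipeline` function and the stage names are made up for illustration, and each stage is just a function that returns `True` when its checks pass.

```python
from typing import Callable, List, Tuple

# A stage is a (name, check) pair; both are hypothetical placeholders
# for whatever your real pipeline runs (unit tests, integration tests, ...).
Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first one that fails."""
    executed: List[str] = []
    for name, check in stages:
        executed.append(name)
        if not check():
            # Fail fast: later (usually slower) stages are skipped.
            return False, executed
    return True, executed


example_stages: List[Stage] = [
    ("unit tests", lambda: True),
    ("integration tests", lambda: False),  # simulated failure
    ("end-to-end tests", lambda: True),
]

passed, executed = run_pipeline(example_stages)
print("build passed" if passed else f"build failed at: {executed[-1]}")
```

Ordering the stages from fastest to slowest is one common way to keep the feedback loop short, because the cheap checks get the chance to fail first.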

What's described in the following is based on cross-functional product development teams. So, you have all the skills required in the team, no matter how the roles are defined. In case you have a different team setup, such as separate teams of programmers, testers, operations people and more, I hope you will still find inspiring ideas in the course.

# What does testing throughout the workflow look like?

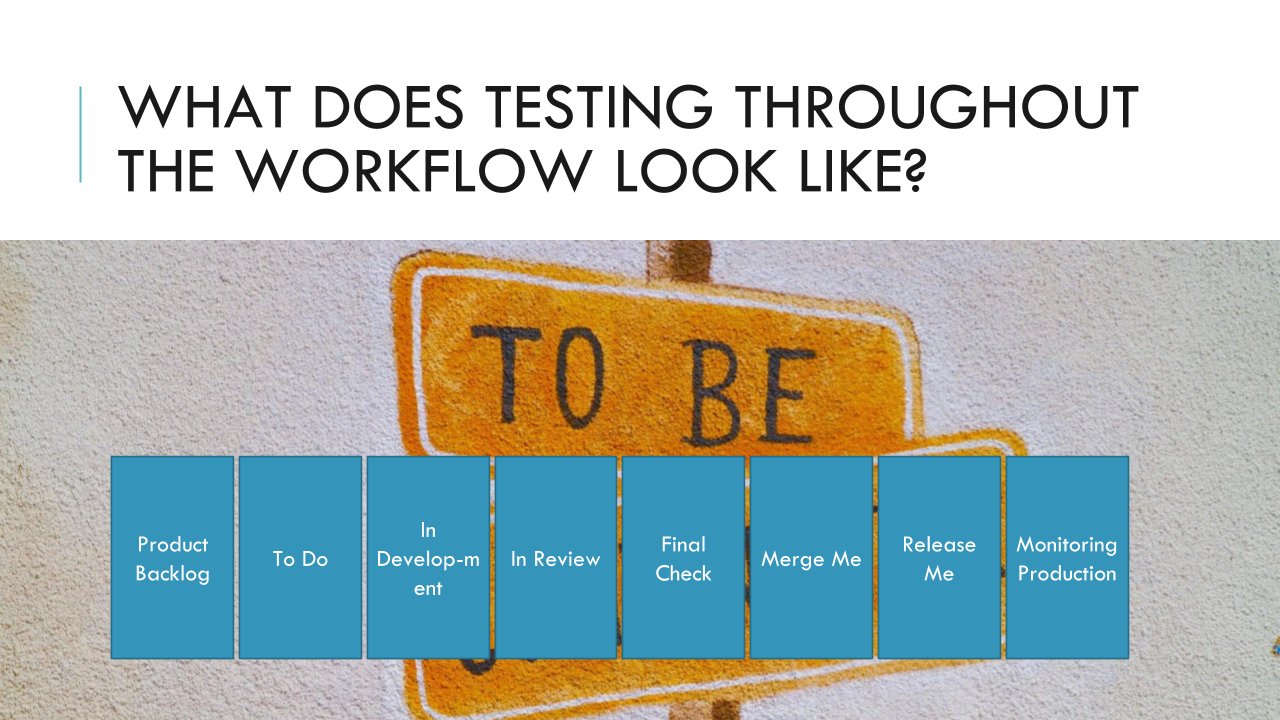

A lot depends on your context, and what is required for your product. You might have a board visualizing your workflow, or part of it, and it might look many different ways. It might have "to do", "in progress", and "done" columns, or maybe there's an "in test" or review column, maybe there's one for code review, or for release, or anything else.

The key is that whether you have a testing column or not, testing is not a phase at the end; instead it should take place throughout the workflow, and by the whole team, so we can ensure we're really building quality in from the start until the end, and even further, when our changes are already in production.

Now here's an example workflow.

You can test the story idea already when it's in the product backlog before any line of code is written, just by asking questions. Here you can already identify a lot of unknown risks, and also build that shared understanding you will need later on.

Now when the story is added to the list of what's next to do, you can already prepare test ideas. If you do, share and discuss them. Make sure everybody is on the same page and has a clear understanding. I tried that by creating mind maps with my test ideas and questions, and then I walked through them with a developer and the product owner. So, at this phase, it would already be a great time to kick a story off by having a three amigos session, and to illustrate behavior by examples.

When a story is in development, you can test early increments to provide faster feedback. Many teams told me, "This story is not ready for testing yet. It didn't even pass code review, so there will be lots of changes anyway." The thing is, it changes all the time. So, in my experience, it's a lot better to test small chunks continuously and early, so we can also provide feedback as soon as possible. Another benefit here is that this way, you don't have a huge task at the end that takes a long time. I just did it, and I convinced them with results.

Now when the first implementation version is done, and the story might enter a review phase, make sure to check out the code changes to identify regression issues. It's important to get your hands on the source code, even if you're mostly testing manually, and/or if you're not familiar with coding. The code is just another information source, and you can find issues or potential risks there, just like anybody else.

Now just before the story is about to get merged, there should only be a few final checks left to do. Let's say we haven't found any blockers stopping the release, so we merge it into an integrated product version. If you have feature toggles in place, you might already deploy to production without releasing it yet, so you can test the new version further in production before releasing it to users.
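Deploying without releasing can be sketched with a very simple feature toggle. This is only an illustration under my own assumptions: the `FeatureToggle` class, the `checkout_page` function, and the user names are hypothetical, and real toggle systems usually read their settings from configuration or a dedicated service.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class FeatureToggle:
    """Hypothetical toggle: off for everyone except listed testers until released."""
    name: str
    released: bool = False
    testers: Set[str] = field(default_factory=set)

    def is_enabled_for(self, user: str) -> bool:
        # The new code is deployed either way; this only decides who sees it.
        return self.released or user in self.testers


def checkout_page(user: str, toggle: FeatureToggle) -> str:
    """Pick the old or new behavior for a given user."""
    if toggle.is_enabled_for(user):
        return "new checkout"
    return "old checkout"


toggle = FeatureToggle(name="new-checkout", testers={"tester@example.com"})
print(checkout_page("tester@example.com", toggle))
print(checkout_page("customer@example.com", toggle))
```

With a setup like this, the team can test the new behavior in production as `tester@example.com` while regular users still get the old one. Releasing is then just a matter of switching `released` to `True`.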

But let's say we released it, which would be fast and safe. Then the testing is still not over because we can still test in production. You can review logs for exceptions, monitor the application for performance issues, usage errors, anything. Have a look at user reports as well, because they can also provide a lot of information about how users use the product, and where they stumble.
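Reviewing logs for exceptions is something a small script can support. The following is a rough sketch, assuming plain-text log lines in which exception names end in `Exception` or `Error`; the `count_exceptions` function and the sample lines are invented for illustration, and your real log format will most likely need a different pattern.

```python
import re
from collections import Counter
from typing import Iterable

# Assumption: exception names look like "ValueError" or
# "java.lang.NullPointerException" somewhere in the log line.
EXCEPTION_PATTERN = re.compile(r"\b([A-Za-z_][A-Za-z0-9_.]*(?:Exception|Error))\b")


def count_exceptions(lines: Iterable[str]) -> Counter:
    """Count how often each exception name appears in the given log lines."""
    counts: Counter = Counter()
    for line in lines:
        for name in EXCEPTION_PATTERN.findall(line):
            counts[name] += 1
    return counts


sample_log = [
    "2024-01-01 10:00:00 INFO request handled",
    "2024-01-01 10:00:01 ERROR ValueError: bad input",
    "2024-01-01 10:00:02 ERROR java.lang.NullPointerException at Foo",
    "2024-01-01 10:00:03 ERROR ValueError: bad input again",
]

for name, count in count_exceptions(sample_log).most_common():
    print(f"{name}: {count}")
```

A summary like this does not replace looking at the logs yourself, but it can point you to where exploring is most worthwhile.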

Now all those things go hand-in-hand with automation.

Automation provides fast feedback at every step, be it integrated in each build, like unit or integration tests, or at later stages, like end-to-end tests, performance or load tests, or security scans: whatever is needed to catch regression issues and give you the confidence you need before release.

Also, automation is needed to free your time to explore for unknown risks throughout the workflow.

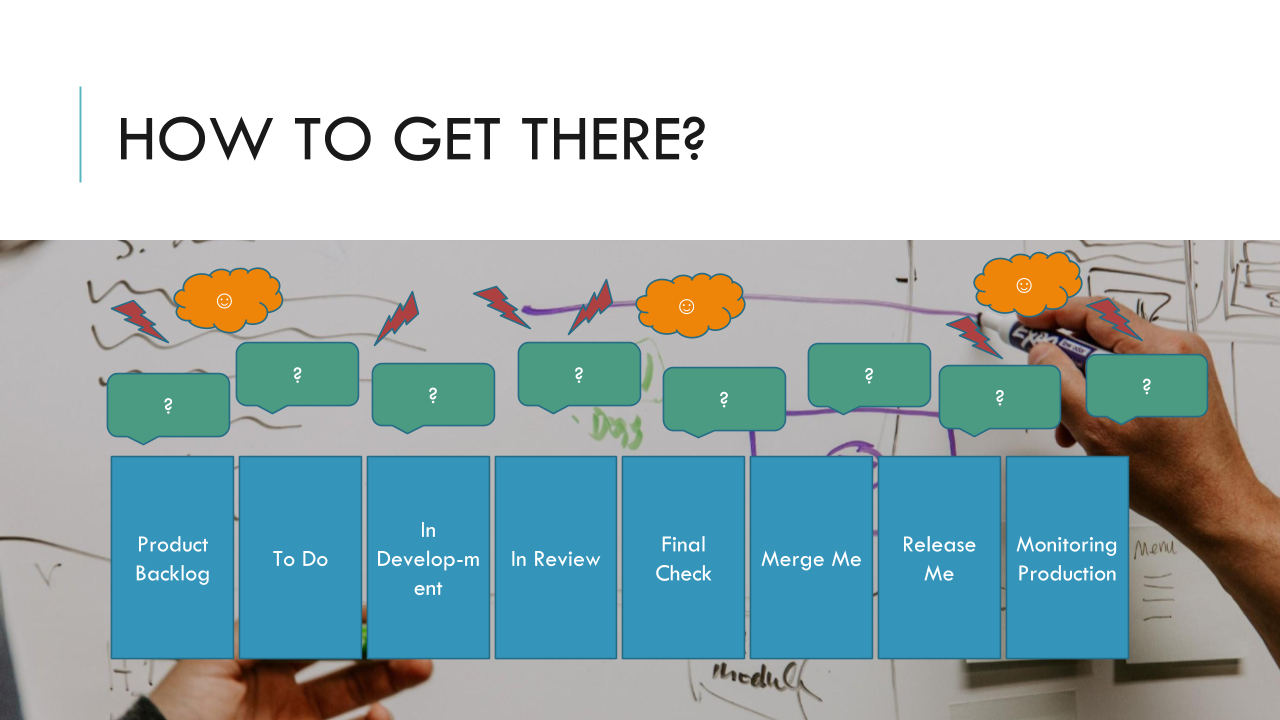

I realize I cannot change everything overnight, though sometimes I wish I could. Changing culture and mindset takes time. Take it step by step by running small experiments. What helps a lot here is to visualize your workflow. I learned that from Abby Bangser, Ashley Hunsberger, Janet Gregory, and Lisa Crispin.

It's best to do this exercise together with your team. Look at which steps you have right now, like these example ones.

Which questions are you trying to answer by testing at which step?

Write them down, and then look at where the current problems are.

If you know that, then you can identify what to improve next.

Brainstorm ideas. Where could you shorten feedback loops? Which small experiments could you try?

Now in the next chapter, we will have a closer look at the whole team approach to get to continuous testing.