Transcript Summary

Your test automation strategy will guide your team in how you will organize automated tests into suites and include them in pipeline stages.

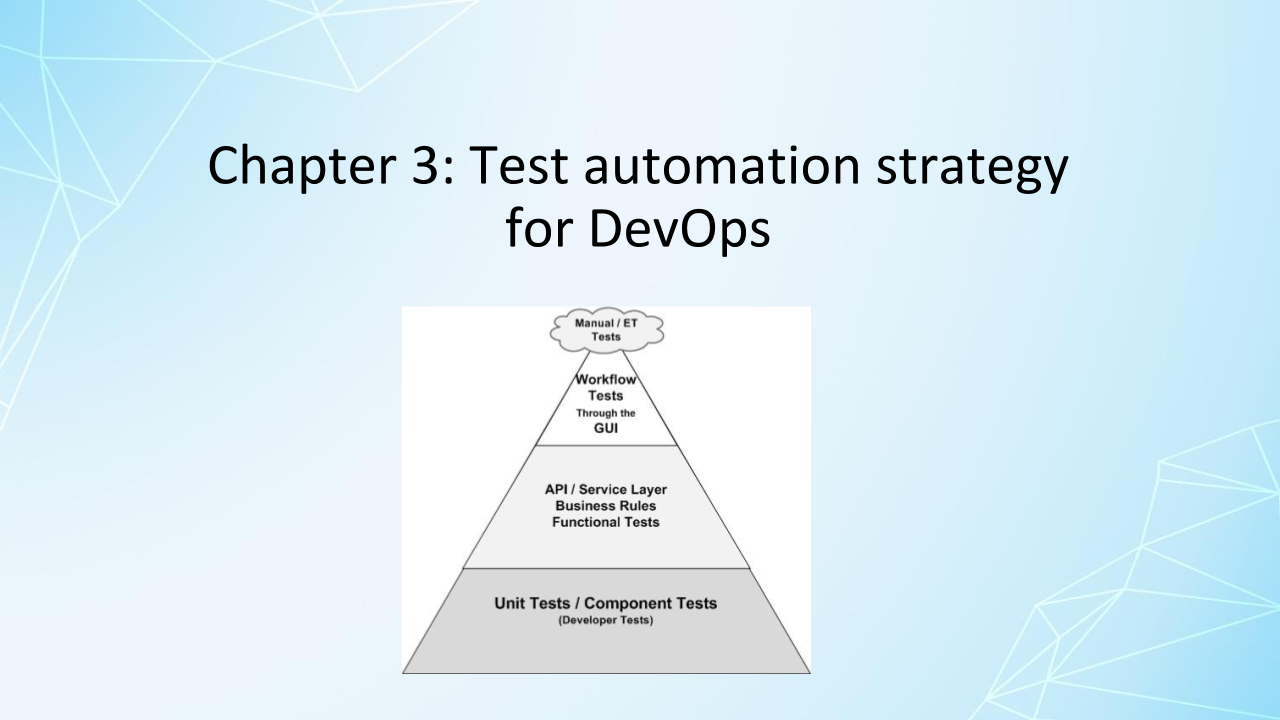

Basic concepts still apply, such as testing at lower levels (for example, the unit level) whenever possible, as modeled by the test automation pyramid.

But there is more in-depth strategy to consider as you decide which tests, at minimum, your pipeline needs for you to succeed with continuous delivery.

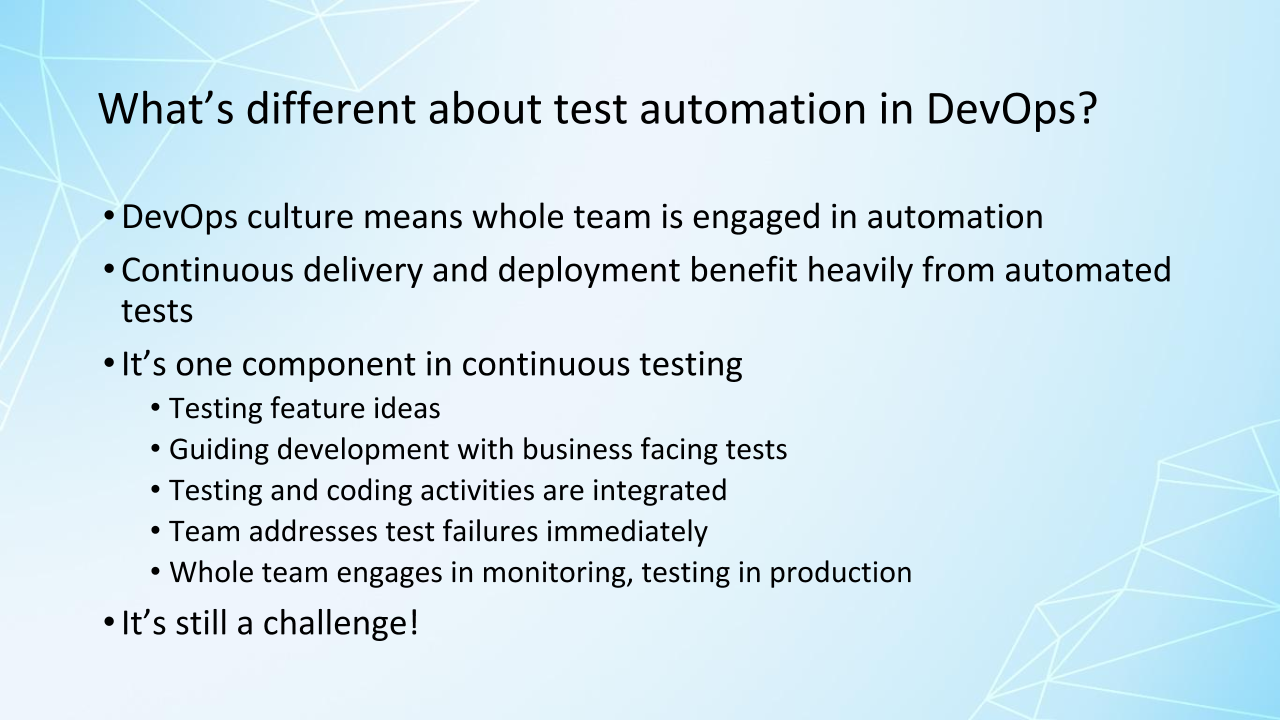

I mentioned earlier that the State of DevOps Survey results show that reliable test automation done by developers with help from testers is correlated with high-performing teams.

That's two different roles working together. And in teams that are successful with continuous delivery, more roles get involved.

Of course, there is more to this than the automated tests that run in the pipelines. On my team, when we needed a new set of UI testing tools because our technology was changing, our system administrator volunteered to do a proof of concept on the driver library we were considering.

Everyone on the team has, or should have, an interest in automating tests.

Successful continuous delivery requires continuous testing, and automation is one part of that. We're collaborating across the team to achieve all these aspects of continuous testing.

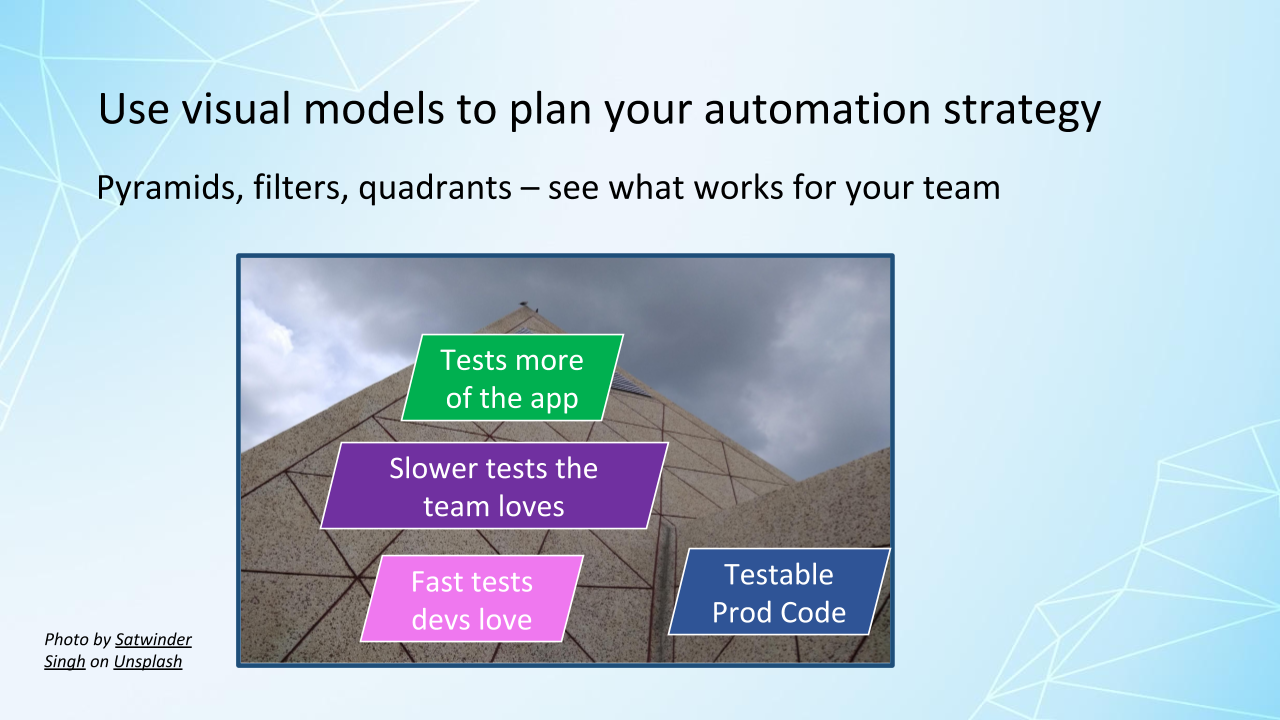

Visual models such as the test automation pyramid can help you explore questions like how to get people in different roles on the team collaborating to produce testable code.

Testable code can prevent many automation problems, such as flaky tests.

At what level should we automate tests? How much of the application does each test have to exercise? We want to minimize the number of layers of the application that each test goes through.

How do we get the automated test suites into the pipeline?

Which tools are appropriate for the team's needs? What skills might the team be missing in order to succeed with automation?

And where should we start with automation, based on risk, value to customers, and ease of automation?

I have some links in the resources section for this chapter to other visual models, including the one in Katrina Clokie's book, A Practical Guide to Testing in DevOps. These visual models are really great for generating conversations about your strategy with your whole team.

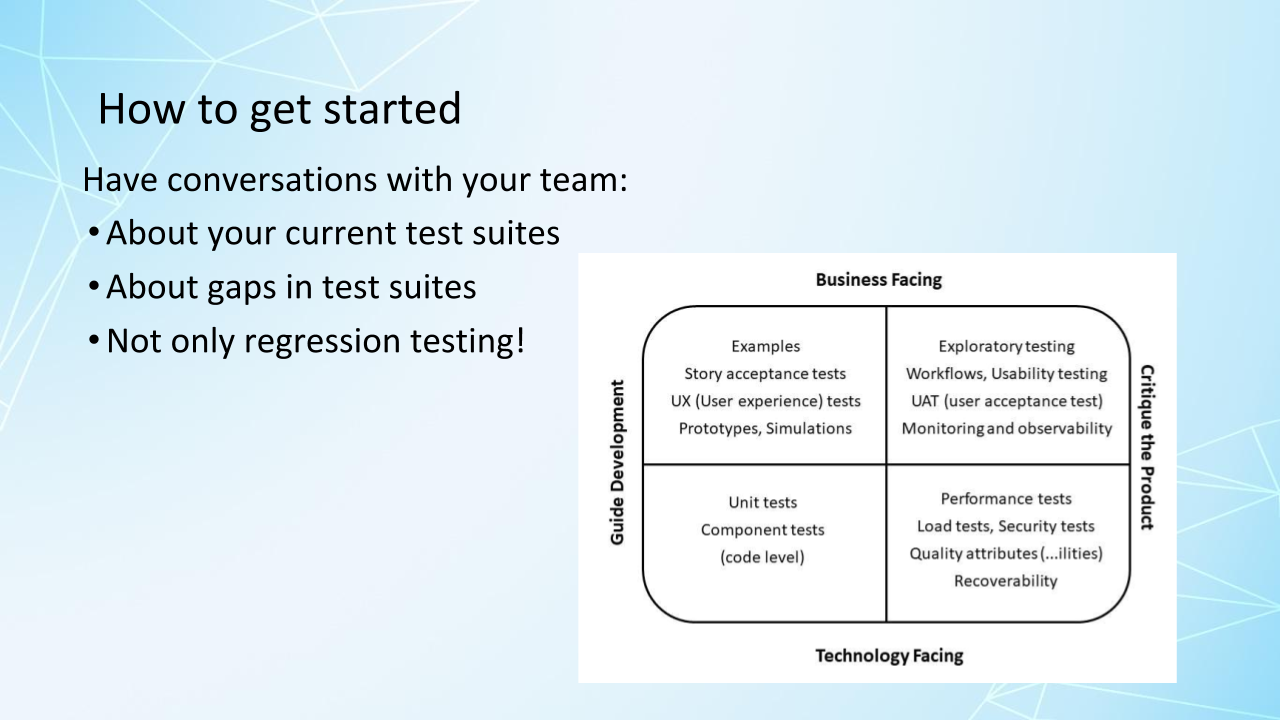

As you're planning your next release, feature, or story, have a conversation with people in different roles on your team about what tests need to be done and which ones should be included in your pipeline.

All kinds of tests may be needed, not only the automated regression tests: performance, security, reliability, resilience, and more.

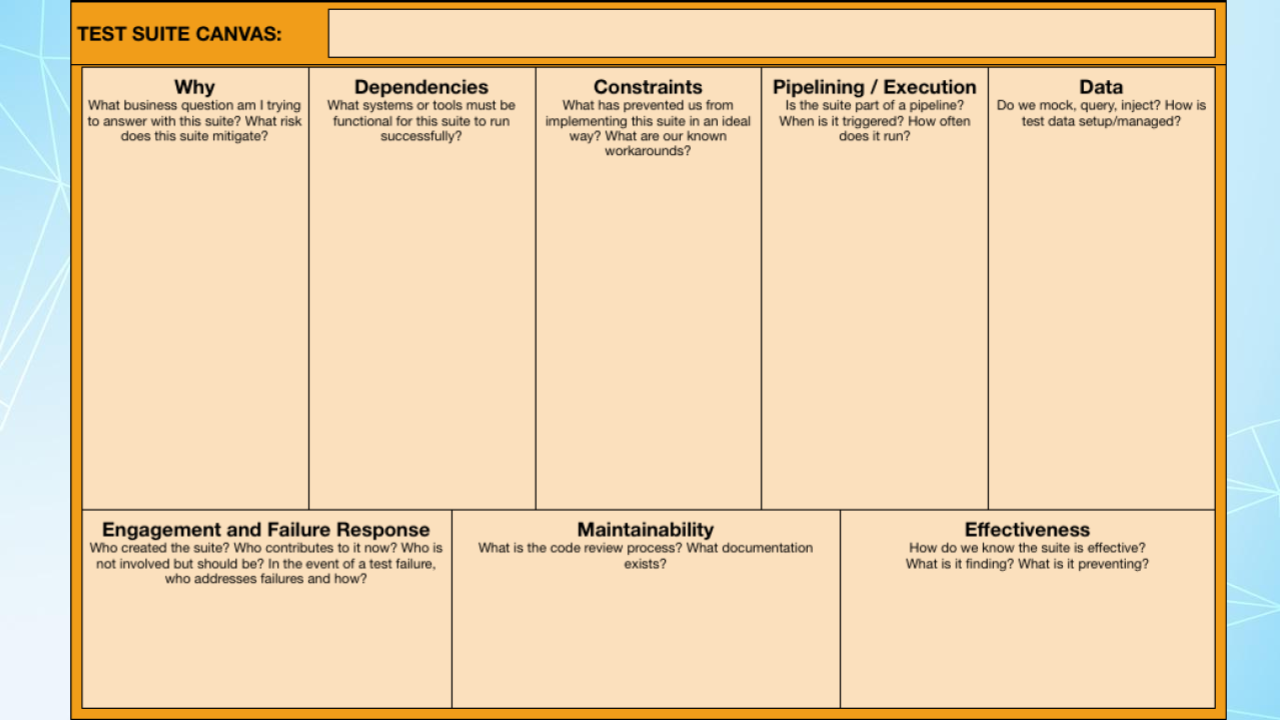

Speaking of having conversations, a great framework for talking about your automated test suites is the Test Suite Canvas from Ashley Hunsberger.

She says that Katrina Clokie inspired her to devise this, and it's really great for evaluating each test suite currently in your pipeline or planning which test suites you would like to add to your pipeline.

There are a lot of different questions here to ask about each test suite. Talking through them will ensure that our tests are reliable, that we address failures in a timely manner, that we have good test data, and that we have the right automated test stages to give us confidence in getting those small changes to production frequently.

It helps the whole team collaborate to make sure we have effective pipeline stages. I've got a link to this canvas that you can download in the resources section for this chapter.

These days, teams try to avoid big design up front and analysis paralysis. Still, talking through all the points in the Test Suite Canvas for each automated test suite you plan is going to reveal obstacles you can anticipate, so you can make sure you have the skills and the tools you need to address them.

It may be that you need to get some additional expertise on your team: get some training, or bring in someone who can help the team ramp up on certain skills.

Maybe you need a database expert to help you create test data.

Maybe you need to integrate your CI output with a communication platform like Slack, and you don't know how to do that.

Maybe your team has had so many test failures from flaky tests that you've developed alert fatigue, and people just aren't paying attention anymore to the failures.

How can you start discussions with your team?

I'd like you to pause the video for a few minutes and think about how you could get people on your team engaged in talking about the Test Suite Canvas and talking about your test suites.

Now, on my previous team, we had a problem with something like alert fatigue, and part of it was simply that people were heads-down and didn't notice failures.

We had big monitors on our wall showing our continuous delivery pipeline status, and when the pipeline failed, the display would light up red. People should have noticed it, but they were heads-down working and didn't. If you're trying to deploy every day, it's afternoon, your deploy pipeline is running and already takes longer than you'd like, and then it fails and nobody notices for an hour, you're not going to deploy that day.

So that was a big problem for us.

We all got together and brainstormed how we could make sure people noticed the failures. We took a pretty drastic step: we hooked a flashing police light up to our production deploy pipeline. If the test suites failed, the police light came on and flashed until somebody on the team took responsibility for investigating the failure and making sure it got fixed.

They didn't necessarily have to fix it themselves, but they had to make sure it was fixed in a timely manner so that we could get our deploys out. This was an experiment. If it hadn't worked, we would have tried something else.

But again, testing problems have to be team problems. Then, with all the different skills on your team, you'll be able to solve them.

Now, if you're watching this, and you don't have any automated tests yet, you just have to get started.

Find out from the business stakeholders which parts of the application are most critical: the parts that have to work and that you have to cover with automated tests. Think about what's valuable to the customer, as well as what's riskiest for the business.

If your code is hard to test, or hard to automate tests for, think about developing the next new feature in a more testable way, and start automating there.

Again, invest time in creating your strategy, choose the tools that are appropriate for your context, and learn how to use those tools.

The diversity of skills on your team is critical here. Test automation is coding, and your team already has programmers. They can collaborate with testers and product owners and other stakeholders to specify and automate the tests.

The teams I worked on that were most successful at continuous delivery did test-driven development at the unit level and used business-facing tests to guide development at higher levels, with practices such as behavior-driven development or acceptance test-driven development. This is a big investment to make, but it helped us succeed with continuous delivery.

And again, if you're starting from no automation, it's a steep learning curve.

Manual regression testing before each release is painful and takes a lot of time. I've found that while the team is learning how to automate at the unit level, or learning test-driven development, we can also automate some UI smoke tests using a simpler automation tool. That fills the gap and saves us time, so we can learn these other skills and really flesh out our test automation to match whatever model we've chosen, such as the test automation pyramid. It also gives us time to get traction on practices like test-driven development.

It's really important that the team gets those first tests into the pipeline.

So, if somebody has just created an API feature and has an automated test to go along with it, they should go ahead and create an automated test suite, even if it only has one test in it. And then the next person who works on a feature can add their test to this existing suite, and they've already got an example of a working test to help them learn how to write the test.
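As a minimal sketch of such a one-test suite, assuming a Python team using the standard `unittest` module: `create_order` and `OrderApiTests` are hypothetical names standing in for the new API feature and its suite, and the handler is called directly rather than over HTTP to keep the example self-contained.

```python
import unittest


# Hypothetical stand-in for the newly built API feature under test.
def create_order(payload: dict) -> dict:
    """Validate an order payload and return a response-like dict."""
    if payload.get("quantity", 0) <= 0:
        return {"status": 400, "error": "quantity must be positive"}
    return {"status": 201, "item": payload["item"], "quantity": payload["quantity"]}


class OrderApiTests(unittest.TestCase):
    """The new suite: it starts with a single test, and teammates add theirs here."""

    def test_create_order_returns_201_for_valid_payload(self):
        response = create_order({"item": "book", "quantity": 2})
        self.assertEqual(response["status"], 201)
        self.assertEqual(response["quantity"], 2)
```

Saved as, say, a hypothetical `test_orders.py`, it runs with `python -m unittest test_orders`, which is also an easy command to add as a pipeline step. The next person who works on an order-related feature adds their own `test_...` method to `OrderApiTests`, using the first test as a working pattern.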

Now, this is a really great way to get your test suite started, get your pipeline stages going.

I'm seeing a lot more teams with older code bases being asked to implement continuous delivery and start using DevOps practices, and that's a problem, because they may not have any test automation.

It can be really hard to get started with an older legacy code base.

One option my teams have used successfully is the Strangler pattern.

The team designs a new architecture that makes it really easy to automate tests, and we build all of our new features in that new architecture. Of course, we need a bridge between the new architecture and the old one, but over time all that old code is eventually replaced. That's one good strategy.
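To make the "bridge" idea concrete, here is a minimal Python sketch of a routing facade, assuming each feature can be addressed by name. `StranglerFacade`, `legacy_get_invoice`, and `new_get_invoice` are hypothetical; in a real system the bridge is often an HTTP proxy or routing layer rather than an in-process class.

```python
from typing import Callable, Dict, List

# A handler takes a request dict and returns a response dict.
Handler = Callable[[dict], dict]


def legacy_get_invoice(request: dict) -> dict:
    # Hypothetical legacy code path: hard to test, kept until it is replaced.
    return {"source": "legacy", "invoice_id": request["invoice_id"]}


def new_get_invoice(request: dict) -> dict:
    # Hypothetical rewrite built in the new, easier-to-test architecture.
    return {"source": "new", "invoice_id": request["invoice_id"]}


class StranglerFacade:
    """Bridge between old and new code: migrated features go to the new code."""

    def __init__(self, legacy: Dict[str, Handler]) -> None:
        self._legacy: Dict[str, Handler] = dict(legacy)
        self._migrated: Dict[str, Handler] = {}

    def migrate(self, feature: str, handler: Handler) -> None:
        # From now on, route this feature to its new implementation.
        self._migrated[feature] = handler

    def handle(self, feature: str, request: dict) -> dict:
        # Prefer the new implementation; otherwise fall back to legacy code.
        if feature in self._migrated:
            return self._migrated[feature](request)
        return self._legacy[feature](request)

    def legacy_remaining(self) -> List[str]:
        # Features still served by old code; the goal is for this to reach [].
        return sorted(f for f in self._legacy if f not in self._migrated)


facade = StranglerFacade({"get_invoice": legacy_get_invoice})
print(facade.handle("get_invoice", {"invoice_id": 7})["source"])  # legacy
facade.migrate("get_invoice", new_get_invoice)
print(facade.handle("get_invoice", {"invoice_id": 7})["source"])  # new
print(facade.legacy_remaining())  # []
```

Because each new implementation sits behind the facade, it can get its own automated tests in the pipeline while the legacy path keeps working unchanged, and `legacy_remaining()` gives the team a simple measure of how much old code is left to replace.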

You can also refactor your legacy code: keep its functionality the same, but redesign the code to make it easier to maintain and easier to automate tests for. Then, as you develop new features and fix bugs, you can automate tests and build up your pipeline.

Get your team members together frequently and use the Test Suite Canvas to review your automated tests.

My own team still has a few tests that developers have to remember to run locally, because they aren't in the pipeline yet. That's bad, because they could forget.

So, respect the tests. Make sure they get into the pipeline, and make sure once they're passing that they keep passing.

There are a lot of considerations about test automation that we're not going to get into here, such as choosing the right tools for your context or analyzing test failures. There's a lot to learn, but again, reliable automated tests that the whole team works on together are critical for continuous delivery success.

Again, the resources section has links to more test automation models to help you with your strategy, as well as to the Test Suite Canvas.

In the next chapter, we'll look at cloud infrastructure and how it can speed up your automated test suites.

Resources

Setting a Foundation for Successful Test Automation by Angie Jones – TAU Course

The Whole Team Approach to Continuous Testing by Lisi Hocke – TAU Course

Modeling Your Test Automation Strategy: Part 1, Part 2, Part 3 by Lisa Crispin (on the TestGuild blog)

A Practical Guide to Testing in DevOps by Katrina Clokie (“Rethinking the test pyramid” section)